We performed a systematic review to synthesize evidence on prognostic factors for physical functioning after multidisciplinary rehabilitation in patients with chronic musculoskeletal pain (Tseli et al., 2019). To assess study quality we used QUIPS, a tool developed specifically for evaluating the quality of prognostic studies (Hayden et al., 2013). We evaluated this process in our article “Elaborating on the assessment of the risk of bias in prognostic studies in pain – aspects of interrater agreement” (Grooten et al., 2019), and here we summarize what we learned from the experience.

Prognostic research

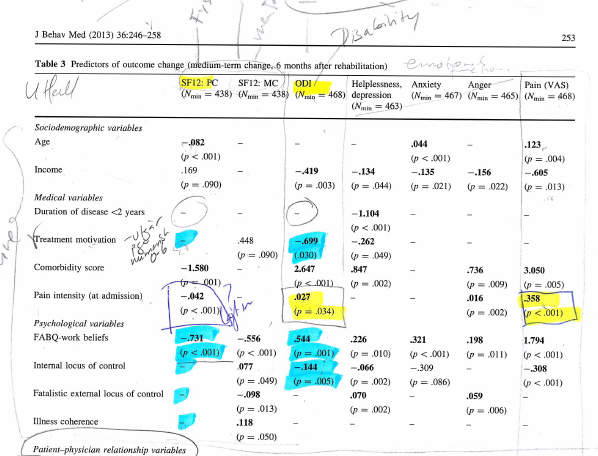

Knowledge of prognostic factors can indicate the likely course of a condition and help clinicians make appropriate treatment decisions based on the prognosis for separate groups of people. However, in the field of pain rehabilitation, the literature reports diverse findings on specific factors, and there is little consensus on which factors are important for the prognosis. It is therefore of great interest to perform a systematic review of existing data, to examine whether a potential prognostic factor is associated with treatment outcome, and to estimate the strength of the association through a meta-analysis. In this process, it is necessary to assess the quality of the included papers in order to decide whether, and to what extent, a paper may contribute to the review and synthesis. This process is called risk of bias (RoB) assessment.

The QUIPS tool

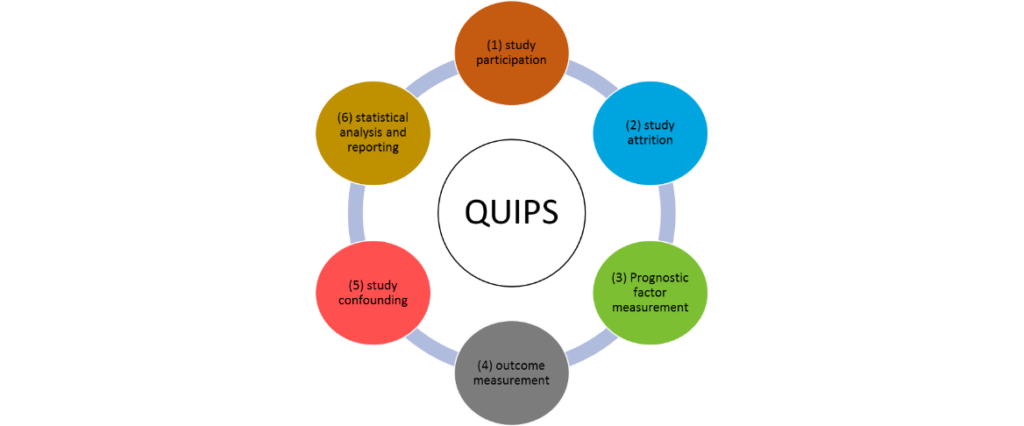

The Cochrane Prognosis Methods Group recommends the use of the Quality In Prognosis Studies (QUIPS) tool to assess RoB in prognostic factor studies. The QUIPS tool covers six domains that should be critically appraised when evaluating validity and bias in studies of prognostic factors: study participation, study attrition, prognostic factor measurement, outcome measurement, study confounding, and statistical analysis and reporting (see figure 1).

Each domain includes multiple items that are judged separately. Based on the ratings of these items, an overall judgment of the risk of bias within each domain is made and expressed on a three-grade scale (high, moderate or low RoB). Preferably, the RoB assessment is made by at least two persons independently. The results of these ratings, and especially the disagreements, then form the basis for discussion, after which a final consensus is reached. In our study, we evaluated the degree of interrater agreement with kappa statistics.
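The step of comparing two independent assessments and collecting the disagreements for the consensus discussion can be sketched in a few lines of Python. This is only an illustration: the domain names follow Hayden et al. (2013), but the data layout, the function name `find_disagreements` and the example ratings are our own assumptions and not part of QUIPS.

```python
from dataclasses import dataclass

# The six QUIPS domains (Hayden et al., 2013).
DOMAINS = (
    "study participation",
    "study attrition",
    "prognostic factor measurement",
    "outcome measurement",
    "study confounding",
    "statistical analysis and reporting",
)
LEVELS = ("low", "moderate", "high")


@dataclass(frozen=True)
class Disagreement:
    study: str
    domain: str
    rater_a: str
    rater_b: str


def find_disagreements(ratings_a, ratings_b):
    """Return every study/domain pair where the two raters differ.

    Both arguments map study id -> {domain: level}. This layout is an
    assumption made for the sketch, not something QUIPS prescribes.
    """
    found = []
    # Only studies rated by both reviewers can be compared.
    for study in sorted(ratings_a.keys() & ratings_b.keys()):
        for domain in DOMAINS:
            a = ratings_a[study][domain]
            b = ratings_b[study][domain]
            if a not in LEVELS or b not in LEVELS:
                raise ValueError(f"unknown RoB level for {study}/{domain}")
            if a != b:
                found.append(Disagreement(study, domain, a, b))
    return found


# Hypothetical example data: two studies rated by two reviewers.
all_low = {d: "low" for d in DOMAINS}
rater_a = {"study_01": dict(all_low), "study_02": dict(all_low)}
rater_b = {"study_01": dict(all_low), "study_02": dict(all_low)}
rater_b["study_02"]["study attrition"] = "high"

for d in find_disagreements(rater_a, rater_b):
    print(f"{d.study} / {d.domain}: A={d.rater_a}, B={d.rater_b}")
```

The resulting list is meant as an agenda for the consensus meeting, not as a substitute for it; the final judgment still comes out of the discussion.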

Strengths and limitations of QUIPS

The QUIPS tool facilitates a structured assessment, and the worksheet is easily adapted to specific needs, which is helpful when disagreements are to be resolved. We believe that the foremost gain of such a systematic process is that it initiates a discussion on important validity aspects. For instance, in our field the outcome and prognostic measures are often established instruments, whereas we have many more problems with response rate and attrition (as we evaluate long-term effects), and statistical approaches vary a lot. The final judgement should be the result of these informed discussions, as the assessment is not merely deterministic but also to some degree subjective. When we used QUIPS in the research field of chronic pain and complex interventions, we reached rather weak agreement in our judgements of RoB for individual domains. In our second set of RoB assessments, we were better prepared for the dilemmas we had encountered and resolved in the first round. As a result, our agreement improved. How easy it is to reach agreement depends partly on which type of study is assessed; some studies investigate specific, delimited factors and outcomes, whereas more complex contexts may require a lot more preparatory work.

Tips for researchers

In our paper, we have provided tips for researchers using QUIPS in the field of rehabilitation medicine. Among these tips, we first highlight the importance of agreeing in advance on how to judge the prompting items; an initial pilot on 2-3 articles can help identify areas in need of clarification. Secondly, plan for an early tuning discussion with the team (after 5-10 articles) to help resolve dilemmas and disagreements that have arisen in practice. Finally, calculate kappa early, as a way of identifying domains that may be in need of harmonization.
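The last tip, calculating kappa early for each domain, could look roughly like the sketch below. It assumes unweighted Cohen's kappa for two raters, which is one common choice of kappa statistic. The function names, the cut-off of 0.6 and the example ratings are hypothetical and chosen only for illustration.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa for two equally long lists of labels."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("need two non-empty rating lists of equal length")
    n = len(ratings_a)
    # Observed agreement: share of studies rated identically.
    p_o = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement, from each rater's marginal distribution.
    count_a, count_b = Counter(ratings_a), Counter(ratings_b)
    p_e = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if p_e == 1.0:
        # Both raters used one and the same level throughout;
        # kappa is undefined in that case.
        return float("nan")
    return (p_o - p_e) / (1 - p_e)


def flag_domains(by_domain, threshold=0.6):
    """Return {domain: kappa} for domains whose kappa is below threshold.

    The threshold is a hypothetical cut-off, not a QUIPS rule. Domains
    with undefined kappa (NaN) are not flagged.
    """
    kappas = {d: cohens_kappa(a, b) for d, (a, b) in by_domain.items()}
    return {d: k for d, k in kappas.items() if k < threshold}


# Hypothetical ratings of six studies by two raters, for two domains.
pilot = {
    "study attrition": (
        ["low", "low", "moderate", "high", "high", "moderate"],
        ["low", "moderate", "moderate", "high", "low", "high"],
    ),
    "outcome measurement": (
        ["low", "low", "moderate", "high", "low", "moderate"],
        ["low", "low", "moderate", "high", "low", "moderate"],
    ),
}

for domain, kappa in flag_domains(pilot).items():
    print(f"{domain}: kappa = {kappa:.2f} (discuss before continuing)")
```

With only a handful of pilot articles the estimate is very imprecise, so a low value is best read as a prompt for discussion rather than as a verdict on the raters.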

Assessing the study quality of more than 40 studies within our systematic review was challenging but also very rewarding. We deepened our overall understanding of the topic and of the many existing challenges in pragmatic prognostic research. It was a valuable way to become more familiar with the included studies before the synthesis. We would also encourage researchers to look at QUIPS when performing their own prognostic research, in order to prevent insufficient reporting and common methodological pitfalls.
