We are all aware of how poorly we feel after a bad night’s sleep. For many, this is a regular occurrence as a result of lifestyle, work pressure or disease. Chief among the latter are sleep apnea, periodic movements and insomnia, which collectively affect more than a quarter of the population.

Apart from the effects of poor sleep on sense of well-being, work performance and cognitive function, poor sleep is a risk factor for many common and serious disorders including hypertension/cardiovascular disease, diabetes, mood disorders, obesity, and overall mortality. The impact of sleep disorders on general health and the economy is, accordingly, huge.

Sleep evaluation

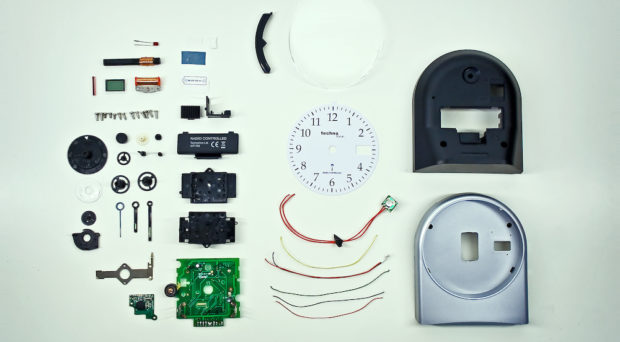

As currently practiced, proper evaluation of sleep entails recording of brain activity (EEG) from multiple sites for several hours. This is typically done under supervision in sleep laboratories equipped with complex monitors. The patient sleeps (or tries to) in an unfamiliar environment while being connected to equipment with numerous wires.

The sleep study generates huge amounts of data that must be distilled into a manageable summary. This task, called scoring, is performed manually by highly trained technologists who review the data in 30-second epochs (800-1000 epochs per study), a task that typically takes 1-3 hours depending on the complexity of the record.

The basic guidelines for this “manual” scoring were written by Rechtschaffen and Kales (R&K rules) fifty years ago, when brain signals were recorded by ink pens writing on paper and microprocessors had not yet been invented. The scorer simply had to distinguish wakefulness from sleep, decide if sleep was REM (dreaming stage) or non-REM and, if non-REM, determine if it was light (stage 1), intermediate (stage 2) or deep (stage 3). The rules were very simple, mostly qualitative and based on pattern recognition. This left much room for subjective interpretation.

Changing times

The R&K rules were appropriate for the time they were written. In the interim, advances in digital technology and signal processing have revolutionized almost every aspect of our lives. It is now possible to digitally analyze a sleep study in under 2 minutes and produce the same R&K stages plus a host of other information that cannot be captured by manual scoring (sleep microstructure).

Also in the interim, much laboratory research using modern signal processing techniques has been done to determine how to better characterize sleep quality and the possible function(s) of several microstructure features. These studies indicate that evaluating sleep microstructure may explain symptoms and disorders that cannot currently be explained, for example non-restorative sleep, poor memory/cognition or excessive sleepiness despite normal (by R&K rules) sleep.

Notwithstanding all these advances, the only benefit to sleep studies from this digital revolution has been conversion of data format from ink on paper to fancy digital displays and more efficient data storage and retrieval. The medically-helpful information we get has hardly changed. WHY?

Time to advance sleep analysis?

The medical profession is, justifiably, very conservative; no new technology/medication is introduced in clinical practice until it is scientifically proven to be safe and effective/reliable. The new technology must also offer some advantage over current practice in the form of greater effectiveness, economy or efficiency in service delivery.

For digital analysis of clinical sleep studies the advantages are faster, more consistent and cheaper analysis than visual scoring and the additional microstructure information it provides. The main barriers, however, have been proving that it is as accurate as manual scoring in implementing R&K rules and lack of convincing evidence that microstructure is clinically useful.

As I argue in a review in Sleep Science and Practice, the use of manual scoring as the gold standard against which automatic systems are judged is unfortunate and no longer justified. There is considerable, well documented variability in how technologists score sleep stages. In a recent study we had sleep records scored three times by each of two technologists and once by a third technologist; a total of seven scoring sessions. When stage 1 was scored in at least one session the probability of a unanimous stage 1 score was <10%. For stage 3 the probability was 25%. Perhaps it is time to move away from the R&K staging system.
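To make the unanimity figure concrete, here is a minimal Python sketch of how such a conditional probability could be computed from repeated scoring sessions. It is not the analysis code from the study: the function name `unanimity_rate`, the stage labels and the toy data are all illustrative assumptions.

```python
from typing import Sequence


def unanimity_rate(sessions: Sequence[Sequence[str]], stage: str) -> float:
    """Among epochs where `stage` was scored in at least one session,
    return the fraction in which every session scored `stage`.

    `sessions` holds one list of per-epoch labels per scoring session
    (hypothetical layout; all sessions must cover the same epochs).
    """
    n_epochs = len(sessions[0])
    if any(len(s) != n_epochs for s in sessions):
        raise ValueError("all sessions must score the same number of epochs")

    scored_by_any = 0
    scored_by_all = 0
    for labels in zip(*sessions):  # labels = one epoch across all sessions
        if stage in labels:
            scored_by_any += 1
            if all(label == stage for label in labels):
                scored_by_all += 1

    return scored_by_all / scored_by_any if scored_by_any else float("nan")


# Toy data (made up): three scoring sessions over six epochs.
toy_sessions = [
    ["W", "N1", "N2", "N2", "N3", "R"],
    ["W", "N2", "N2", "N2", "N3", "R"],
    ["W", "N1", "N2", "N3", "N3", "R"],
]

n1_rate = unanimity_rate(toy_sessions, "N1")  # stage 1
n3_rate = unanimity_rate(toy_sessions, "N3")  # stage 3
```

With seven real sessions per record the same function applies unchanged; only the length of `sessions` differs.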

It is ironic that a difference in up to 20% of epochs (≈150 epochs/record) in the stages assigned by two technologists is considered acceptable but a difference between the automatic and a single scorer in a few epochs is considered unacceptable. Unlike the case with other new technologies where a single physician cannot independently evaluate the technology and results of clinical trials are accepted, in the case of scoring sleep every technologist or physician considers himself an expert and any difference between his score and the automatic score is considered an error.

Given that experts cannot agree with each other on the stage in a large percent of epochs, it is inevitable that every expert will find many epochs staged “incorrectly” by the automatic system. Thus, there will be total agreement among experts that the automatic system is inaccurate, and hence should not be used, even when agreement between the automatic score and the average score of ten experts was shown in large studies to be similar to or better than agreement among the ten experts.
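One way to read this comparison is sketched below in Python: compute the average epoch-by-epoch agreement between the automatic score and each expert, and set it beside the average agreement between pairs of experts. This is an assumption about how such a comparison might be set up, not the method used in the studies mentioned; the function names and the toy labels are hypothetical, and simple percent agreement stands in for whatever statistic those studies used.

```python
from itertools import combinations
from statistics import mean
from typing import List, Sequence, Tuple


def percent_agreement(a: Sequence[str], b: Sequence[str]) -> float:
    """Fraction of epochs on which two scorings assign the same stage."""
    if len(a) != len(b):
        raise ValueError("scorings must cover the same number of epochs")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def compare_agreement(
    auto: Sequence[str], experts: List[Sequence[str]]
) -> Tuple[float, float]:
    """Return (mean auto-vs-expert agreement, mean expert-vs-expert agreement)."""
    auto_vs_expert = mean([percent_agreement(auto, e) for e in experts])
    expert_vs_expert = mean(
        [percent_agreement(a, b) for a, b in combinations(experts, 2)]
    )
    return auto_vs_expert, expert_vs_expert


# Toy data (made up): three experts and one automatic score over four epochs.
toy_experts = [
    ["W", "N1", "N2", "N3"],
    ["W", "N2", "N2", "N3"],
    ["W", "N1", "N2", "N2"],
]
toy_auto = ["W", "N1", "N2", "N3"]

auto_mean, expert_mean = compare_agreement(toy_auto, toy_experts)
```

In this toy case every expert who disagrees with the automatic score on some epoch could call it "inaccurate", even though it agrees with the experts more often on average than they agree with each other.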

In the same review I argue for including digital EEG analysis in every clinical sleep study. Not only would it provide consistent and fast evaluation of sleep quality but, given that >10,000 clinical sleep studies are performed daily, it would make it possible to rapidly and very cheaply evaluate the clinical usefulness of various promising microstructure features. If we wait for scientists to perform expensive, dedicated large-scale research studies to determine the clinical utility of each microstructure feature before it is incorporated in clinical studies, progress in treating sleep disorders will, unnecessarily, be greatly delayed.

Maybe I’m looking at this the wrong way, but I find it really weird that there’s so much pushback against digital sleep analysis. I understand the need for accuracy, by all means, and there’s probably still some benefit to having the human element involved (even if I do secretly hope the process extends a little past “someone watching me sleep and writing down what happens”), but it really seems like a lot of people are worried that their job or expertise might get called into question if the digital tracking takes off. Surely there’s some happy medium that can be reached between the two methods, even if the digital process still needs some analysis and review. I guess I just want to speed it along so maybe we can start helping more people sooner, given how widespread sleep disorders are becoming in the age of computer-facing employment and smartphones.