“There is no such thing as public opinion. There is only published opinion” – Sir Winston Churchill

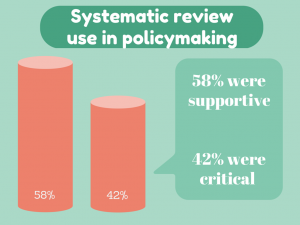

Should systematic reviews be used to inform policymaking? The debate for and against can get quite heated. New research published yesterday in Systematic Reviews indicates that those who are critical of using systematic reviews in policymaking are more than twice as likely to have pharmaceutical, tobacco or insurance industry ties compared with those who support their use.

Evidence-based policy has a simple aim: to use evidence to inform policymaking.

The use of systematic reviews has become increasingly common. Rather than leaving pieces of evidence scattered across different studies and platforms, systematic reviews allow researchers to summarize the evidence in a form that decision makers can use.

However, as with most things, the value of systematic reviews in informing policymaking is hotly debated.

Susan Forsyth and colleagues’ study, published yesterday in Systematic Reviews, searched for opinion pieces on the value of using systematic reviews in policymaking and assessed whether funding sources or financial conflicts of interest were associated with the direction of the authors’ opinions.

Not surprisingly, the study found that those critical of using systematic reviews in policymaking were over twice as likely to have industry ties as those who supported their use. Those supportive of systematic review use, by contrast, were more likely to be affiliated with government.

Debate relating to the methods

When it came to the reasons behind the opinions, the majority of arguments against the use of systematic reviews in policymaking were methods-based.

| Arguments for systematic review use | Arguments against systematic review use |
|---|---|
| Well-conducted reviews increase transparency | Cherry-picking of studies for inclusion |
| Well-conducted reviews reduce bias | Magnification of publication bias |
| Well-conducted reviews have comprehensive methods | Inclusion of methodologically flawed studies/trials |
| Well-conducted reviews provide more complete information than individual studies | Research questions are too narrow |

There is a theme in the above table. The arguments critical of systematic reviews in policymaking all depend on the quality of a systematic review’s methods. If a systematic review follows the rigorous criteria of today’s best practice, these methods-based arguments lose much of their force.

These criteria include publication of a prospective protocol, a clearly defined research question, comprehensive search strategies covering both published and unpublished research, pre-defined criteria for study inclusion, quality assessment of included studies, and reproducible methods.

The EQUATOR Network is home to a comprehensive database of reporting guidelines for health research, including the Preferred Reporting Items for Systematic reviews and Meta-Analyses (more commonly known as PRISMA). PRISMA sets out the gold standard for transparent and complete reporting of systematic reviews.

Numerous organizations set standards for systematic reviews to ensure they are of high enough quality to inform policymaking: for healthcare there is The Cochrane Collaboration, for social care The Campbell Collaboration, and for environmental research The Collaboration for Environmental Evidence.

Debate relating to use

Arguments in favour of using systematic reviews in policymaking also rested heavily on the quality of their methods.

The utility-based arguments stated that well-conducted systematic reviews are the highest level of evidence, that they effectively summarize large bodies of evidence, that they have strong external validity, and that they are useful for identifying gaps in knowledge and research priorities. Critical articles, by contrast, argued that methodological flaws leave systematic reviews of little use.

One concern shared by supportive and critical articles alike was the ‘generalizability’ of systematic reviews. Disease complexities, along with cultural and socioeconomic variability between populations, can limit the translation of systematic reviews into policy.

What now?

High-quality systematic reviews give the best picture of the current state of evidence on a subject. Including unpublished studies minimizes the effect of publication bias; research has highlighted that unpublished studies are more likely to show an intervention to be ineffective.

This concealment of unfavourable results may be one reason behind industry’s opposition to using systematic reviews in policymaking. To appraise any article effectively, readers need authors to disclose their conflicts of interest.

Personally, I don’t think that all policy should be based on systematic reviews alone; the arguments about the generalizability of systematic reviews are valid. But when it comes to condensing all the available evidence into a usable form, I don’t think we have a better alternative. Well-conducted systematic reviews are both rigorous and transparent, and they offer the best summary of the available evidence.

From my perspective, evidence should be the foundation of policy decisions, though not the entire structure. Cultural and socioeconomic variables must also be taken into account.

Fortunately, researchers are tackling these limitations. Frameworks are being developed to take these variables into account when applying systematic reviews in policymaking, and initiatives such as the Grading of Recommendations Assessment, Development and Evaluation (GRADE) assess the quality of the evidence and the strength of recommendations. At the same time, the PRISMA statement encourages researchers to state the PICOS (Participants, Interventions, Comparisons, Outcomes and Study design) of their review, which aims to increase the applicability of systematic reviews.

It is only by combining all the information available that we can make informed decisions in policymaking.
